Asking a chatbot for health advice? After the recent launch of OpenAI's ChatGPT Health service, there are several important factors to consider.

With the launch of ChatGPT Health by OpenAI, many questions have arisen about the appropriateness of turning to AI chatbots for medical recommendations.

As the number of users seeking advice from chatbots increases, it was only a matter of time before companies began offering solutions dedicated to medical issues.

OpenAI has released ChatGPT Health, an enhanced version of its chatbot that, according to the developers, can analyze users' medical data, including health records and data from wearable devices, to provide health information.

Access to the program is currently available through a waiting list. Rival company Anthropic offers similar features in its chatbot Claude to a select group of users.

Both companies emphasize that their technologies are not intended to replace professional medical care and should not be used for diagnosis. They can, however, be useful for summarizing and clarifying complex test results, preparing for a doctor's visit, or identifying significant health trends that may be hidden in medical records and statistics.

But how reliable and safe are they in analyzing health data? And should we rely on them?

Here are some important points to consider before discussing your health with a chatbot:

Chatbots can offer more personalized information than traditional internet searches

Some medical professionals and researchers who have tested ChatGPT Health and similar platforms believe that this is a step forward compared to existing methods.

Although AI systems are not perfect and can sometimes make mistakes or provide inadequate advice, the information they provide is often more tailored to the specific situation than what can be found through Google.

As Dr. Robert Wachter from the University of California, San Francisco noted: “Often the alternative is a lack of information or guessing. Therefore, if these tools are used responsibly, they can provide really useful insights.”

In countries like the UK and the US, where waiting times for doctor appointments can stretch for weeks and time in emergency departments can be lengthy, chatbots can help reduce anxiety and save time.

Moreover, they can indicate the need for urgent medical attention in the case of serious symptoms.

One of the advantages of the new chatbots is that they can tailor their responses based on the user's medical history, including medications taken, age, and previous doctor visits.

Even if you do not give the AI access to your medical records, Wachter and other experts recommend sharing as much relevant detail as possible to make the responses more accurate.

Do not turn to AI for alarming symptoms

Experts, including Wachter, emphasize that in some situations it is best to seek help from a doctor rather than a chatbot. Symptoms such as shortness of breath, chest pain, or severe headaches require immediate medical attention.

Even in less critical situations, patients and doctors should be cautious with AI programs, says Dr. Lloyd Minor from Stanford University.

“When making significant medical decisions or even for less important health issues, one should not rely solely on the conclusions provided by large language models,” adds Minor, dean of Stanford's medical school.

Even with conditions like polycystic ovary syndrome (PCOS), where symptoms can vary, it is better to consult a doctor, as the diagnosis will affect treatment choices.

Be mindful of privacy before uploading medical data

Many of the benefits offered by AI bots depend on how willingly users share personal medical information. It is important to remember that data transmitted to AI developers is typically not protected by federal privacy laws in the US that govern the handling of sensitive medical information.

The law known as HIPAA protects medical information and imposes penalties on medical institutions that disclose it. However, it does not apply to companies that create chatbots.

“When a person uploads their medical record to a large language model, it is not the same as handing it over to a new doctor,” explains Minor. “Consumers should be aware that the privacy standards here are completely different.”

OpenAI and Anthropic claim that user data is stored separately and protected by additional security measures. The companies do not use medical data to train their models. Users must separately consent to the transfer of such information and can withdraw their consent at any time.

Despite the growing interest in AI, independent research on such technologies is still in its early stages. Initial results show that programs like ChatGPT perform well on medical exams but do not always interact effectively with real people.

A study from the University of Oxford involving 1,300 participants found that people who used AI chatbots to look up information about hypothetical conditions did not make better-informed decisions than those who relied on traditional online searches or their own judgment.

In cases where AI chatbots were presented with written medical scenarios, they correctly identified the underlying condition 95 percent of the time.

“There were no problems with that,” says lead author Adam Mahdi from the University of Oxford. “All the difficulties arose during communication with real participants.”

Mahdi and his team identified numerous communication issues. People often did not give chatbots enough information for an accurate diagnosis. In turn, AI systems frequently gave mixed responses, making it difficult for users to distinguish correct information from incorrect.

The study, conducted in 2024, did not test the latest versions of chatbots, including ChatGPT Health.

A second opinion from AI may be helpful

The ability of chatbots to ask clarifying questions and extract important details from users is an area where, according to Wachter, there is still room for improvement.

“I believe they will become really effective when their approach to communicating with patients is more 'medical,' and the dialogue resembles a real conversation,” says Wachter.

Nevertheless, one way to increase confidence in the information received is to use multiple chatbots, just as patients do when seeking a second opinion from another doctor.

“Sometimes I enter the same data into ChatGPT and Gemini,” shares Wachter, referring to the AI tool from Google. “And when their responses match, I feel more confident that it is the correct answer.”

The article "Turning to a chatbot for medical advice: is it worth it?" first appeared in K-News.

Read also:

The Trump Administration Ordered Military Contractors and Federal Agencies to Cease Cooperation with Anthropic

According to CNN, the Trump administration's decision resulted from a conflict between...

AI Can Change Your Political Views

According to a conducted study, brief interaction with a trained chatbot turned out to be four...

Users are Massively Deleting ChatGPT: What is the Reason?

The author of the article is K-News. Any copying or use requires permission from the K-News...

AI Chatbots Distort Users' Reality — Research

According to a study by analysts at Anthropic, interaction with AI chatbots can, in rare but...

The Cabinet allowed the branding of livestock during microchipping: the "i" mark will be applied with liquid nitrogen

Curl error: Operation timed out after 120001 milliseconds with 0 bytes received...

Futurist: Writers Will Pay for AI to Read Them

Recent events confirm his words. For example, the AI development company Anthropic agreed to pay...

Anthropic accused Chinese companies of stealing Claude's data

According to Anthropic, the use of such methods by Chinese companies violates American export...

Tokayev: Kazakhstan has entered a new stage of modernization

Curl error: Operation timed out after 120001 milliseconds with 0 bytes received...

TikTok tracks you even if you don't use the app

Despite TikTok collecting data on its users, it is important to note that the company also gains...

AI Claude demonstrated the ability to think by solving an open mathematical problem

The author of the material is K-News. Any copying or partial use is possible with the permission of...

OpenAI Updates ChatGPT: It Now Has Eight "Characters"

The GPT-5.1 Instant model will be the primary one for most tasks in ChatGPT. It uses adaptive...

Sadyr Japarov signed the updated edition of the constitutional law "On the National Bank"

- President Sadyr Japarov approved a new edition of the constitutional law "On the National...

Iran can continue to wage war against the US and Israel "for as long as it wants." What else happened?

The material is prepared by K-News. For copying or partial use, prior permission from the K-News...

Will the Central Asia Become the Main Arena for the Struggle for Resources Between the USA, Russia, and China?

At a round table titled "Global Trends in Central Asia: From Security Provision to the...

President Sadyr Japarov responded to Atambayev's accusations

- Hello, Sadyr Nurgoyevich. We would like to get your comment regarding the recent address by...

The Person Inside the Brain: Everyone Lives in Their Own Mental Umwelt

Curl error: Operation timed out after 120001 milliseconds with 0 bytes received...

Why Pediatric Oncology Requires Special Attention from the State – An Interview with an Oncologist

– Problems do exist. Even with sufficient funding, pediatric oncology remains one of the most...

ChatGPT will determine users' age

OpenAI has announced the implementation of a new feature in ChatGPT that will determine the age of...

Australia introduces a complete ban on social media for users under 16 years old

New age restrictions, which will come into effect on December 10, will affect the largest social...

The Cabinet approved the procedure for maintaining state registers of breeding achievements and traditional knowledge.

On December 3, 2025, the Cabinet of Ministers approved Resolution No. 774, which established new...

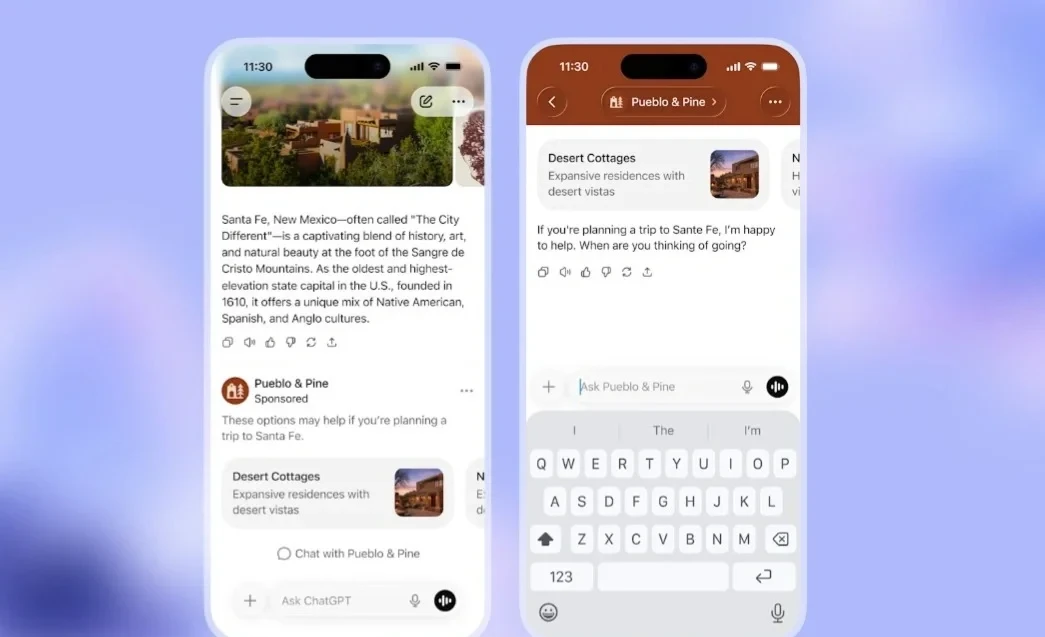

OpenAI Launches Ads in the Free Version of ChatGPT

OpenAI has announced the start of testing advertising in ChatGPT, which will be available to users...

Slavoj Žižek: Why We Are Still Alive in the Dead Internet

Slavoj Žižek emphasizes that when we hear about the control that artificial intelligence begins to...

"Sometimes Harsh, but Necessary". How Entrepreneurs Assess the Year 2025

According to the Central Bank, Kyrgyzstan is forecasting an economic growth of more than 10% by the...

Iran vs. USA: Technological Capabilities of Countries on the Battlefield

The material was prepared by K-News. Permission from the K-News editorial team is required for...

Trump threatens to send troops to the Hormuz Strait to unblock it. What else has happened in Iran?

The material was prepared by K-News. Copying or partial use is only possible with the permission of...

Elon Musk filed a lawsuit against the creators of ChatGPT for over $100 billion

Entrepreneur Elon Musk has initiated legal proceedings against OpenAI, the developer of ChatGPT,...

Insatiable Intelligence: How Much Electricity Do Neural Networks Consume

The creation of more complex and large-scale artificial intelligences has become complicated due...

Mostbet Tajikistan – A Successful Betting Company with Wide Opportunities

Mostbet offers its users a wide range of services since its inception. With a license to operate...

UNESCO warns of a serious decline in freedom of expression and journalist safety worldwide

In its main report, UNESCO recorded a significant decline in the level of freedom of expression—by...

AI Can Detect 130 Diseases While You Sleep

A new artificial intelligence model has been created that can predict the risk of serious diseases...

The creator of ChatGPT, OpenAI, releases a browser in an attempt to compete with Google.

Atlas is being introduced at a time when OpenAI is actively seeking new ways to monetize its...

Microsoft has ended free support for Windows 10. What users need to know

Microsoft has completed the free upgrade and support for Windows 10 as of October 14, according to...

AI Bots Have Their Own Social Network: What You Need to Know About Moltbook

In this social network, AI agents, which are autonomous virtual assistants, are capable of...

What the New Consumer Credit Law Is About — Text

- The President of Kyrgyzstan, Sadyr Japarov, has approved a law concerning amendments to certain...

Sadyr Japarov explained why old driver's licenses are being replaced with new ones

In Kyrgyzstan, cases of document forgery have been recorded, which has negatively affected the...

Recruitment of Volunteers for the Protection of Important Facilities Has Begun in the Regions of Russia

In Russia, an active program has begun to recruit volunteers into special units that will be...

Sam Altman predicted superintelligent AI "in a couple of years"

In his speech, Altman emphasized that such predictions require serious analysis, even if they may...

Why Esports is Becoming More Like Traditional Sports

The material is prepared by K-News. Permission from the K-News editorial team is required for...

Cardiovascular diseases remain one of the leading causes of mortality – cardiologist

According to information provided to the Kabar agency, cardiovascular diseases continue to rank...

Flirt, Erotica, and the End of Censorship. OpenAI Will Change the Rules of Communication with ChatGPT

According to Altman, this decision is aimed at respecting adult users. Previously, the company had...

"Adilet" analyzed the draft law on the confiscation of property before a court decision

On the Unified Public Discussion Portal, an analysis of the draft law "On Amendments to...

Chinese AI App Sends Hollywood into a Panic

Seedance 2.0, developed by ByteDance, allows for the generation of high-quality videos with sound...

Kickboxing. Fight for the World Champion Title

Fight for the World Champion Title among professionals in kickboxing according to WAKO-PRO rankings...