In his essay, Professor Colin Lewis argues that we are on the brink of an era where traditional methods of controlling artificial intelligence are losing their effectiveness.

The question is no longer how technologies will develop, but whether society will be able to cope with their consequences. AI was initially seen as an exciting novelty, but it is now becoming clear that it is, in fact, a test of the resilience of our social institutions. Lewis argues that we need to stop relying solely on technical restrictions and start building a "hardened society."

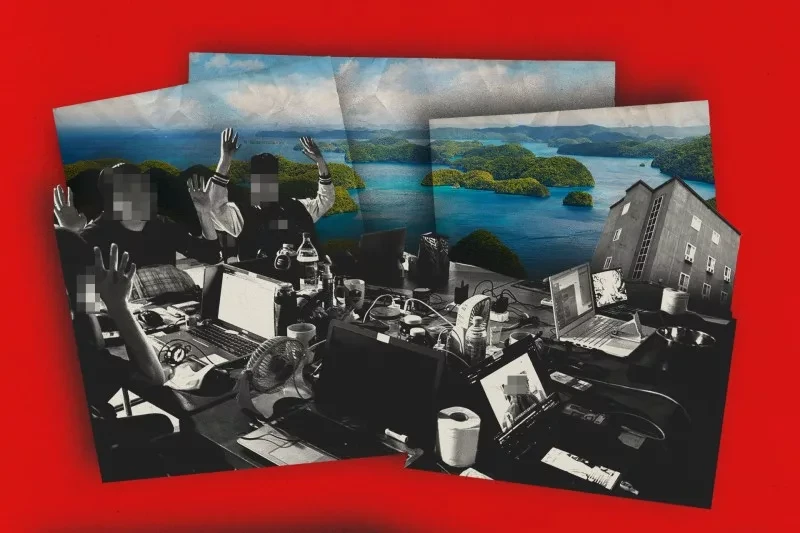

The first significant shift went largely unnoticed, when "the lock at the gate was found to be broken." In 2020, training advanced models cost millions of dollars; just two years later, those costs had fallen tenfold. As Lewis notes, "even a small team with a good server can bypass the protective filters that previously required the budgets of large companies." In other words, the old strategy of control through restricted access no longer works.

This idea is developed in a study by a group of researchers led by Jamie Bernardi. Their work emphasizes that the "bottleneck" of control is disappearing and that methods such as "multiple hacking" make it possible to bypass restrictions even without access to a model's internal parameters.

Moreover, attempts to make systems "safe" through prohibitions only create new risks. Lewis describes a "trade-off between use and abuse": if you prohibit models from learning how a virus works in order to prevent bioterrorism, you also deprive a new generation of doctors of a tool for fighting real pandemics. Likewise, if you train AI to recognize components of explosives or biological weapons so that it can block dangerous requests, you inevitably hand that knowledge to those looking for ways to circumvent the protection.

The danger is compounded by the fact that society is already reshaping itself around this logic. "We do not ask whether AI is adapting to us," writes Lewis. "We simplify our institutions so that algorithms can pass through them more quickly." In recent years, the economy, the media, and government processes have been digitized and standardized so that machines can process them efficiently. As a result, "we are quietly training the world to be convenient not for people, but for systems."

This is no longer just an engineering task but a political one. The goal is not to refine models but to restructure society. If AI is used to attack energy infrastructure, what is needed is not a "more ethical" AI but a system capable of restoring its own functionality. If deepfakes distort an election, detecting them matters less than ensuring that society is prepared to hold the vote again. This approach dispels the illusion that the problem can be solved by technical means alone: it is now a matter of political will and institutional maturity. Technology does not free us from the need to make political decisions; it only makes the consequences of mistakes more severe.

Hence the need for a "hardened" society. This requires systemic adaptation, from verifying human identity on digital platforms to developing defensive AI systems and backup infrastructure for critical sectors such as energy and healthcare. This is not a bright technological future but routine, vital work: constant checks, backup systems, and readiness for failure.

Whether such a society can cope with the consequences of AI depends, according to Lewis, on three factors: preserving the human right to override algorithmic decisions, building infrastructure that holds up under failure, and maintaining a constant "discipline of the cycle," that is, the continuous identification and management of risks. Society faces a choice: keep adapting to machines or strengthen its institutions. "It is time to stop being spectators and start being architects," the author concludes.

The article "The Moment of Control Over AI is Missed, Now the Future Belongs to a 'Hardened Society'" first appeared on K-News.